Rank & Select

Last week: Models of computation Link to heading

- Big-O notation

- Word RAM model

- Applications & limitations

Today Link to heading

- Bit vectors

- storing bits

- Rank

- counting 1-bits

- Select

- finding 1-bits

- Engineering Rank

- making it fast

- Elias-Fano coding

- storing integers efficiently

Bit vectors Link to heading

A static bit vector is an implicit data structure storing \(n\) bits \(b_0, \dots, b_{n-1}\) with constant overhead.

- \(\mathsf{get}(i)\): returns \(i\)’th bit \(b_i\)

Implemented using e.g. Vec<u64>.

| |

Little Endian: the low-order bits are first in memory.

\[ \newcommand{\rank}{\mathsf{rank}} \newcommand{\select}{\mathsf{select}} \]

Binary Rank & Select Link to heading

A rank query on a bit vector counts 1-bits before a position \(i\) (for \(0\leq i\leq n\)).

- \(\rank(i) := \sum_{0\leq j < i} b_j\)

A select query on a bit vector returns the position of the \(j\)’th 1 (zero-based, for \(0\leq j < \rank(n)\)).

- \(\select(j) :=\) index \(i\) such that \(b_i=1\) and \(\rank(i) = j\).

Also: \(\rank_0\) and \(\select_0\) for counting/finding 0-bits.

What is \(\rank_1(\select_1(j))\)?

And \(\select_1(\rank_1(i))\)?

\(\rank_1(\select_1(j))\) equals \(j\).

\(\select_1(\rank_1(i))\) finds the position of the first 1 at position \(\geq i\).

Binary Rank & Select: Goal Link to heading

There exists a data structure that answers rank and select queries:

- in constant \(O(1)\) time,

- using succinct \(o(n)\) bits of additional space.

Rank: first steps Link to heading

Compute the answer on-demand:

- No space overhead

- \(O(n/\log n)\) query time

- We pop-count \(w=\Theta(\log n)\) bits at a time.

Pre-compute and store all answers:

- \(n\) words overhead

- \(O(1)\) query time

Rank: Binary search Link to heading

Store positions of all 1-bits. Then binary search to get the number of them at position \(\lt i\):

- \(k\) words overhead, for \(k := \rank(n)\).

- \(O(\log k)\) query time

Rank: Checkpoints Link to heading

Store a checkpoint every \(s\) bits: \(R_k := \rank(k\cdot s)\).

- \(n/s\) words overhead

- \(O(s/\log n)\) query time: \[\rank(i) = R_{\lfloor\frac is\rfloor} + \sum_{s\lfloor\frac is\rfloor \leq j < i} b_j\]

For \(s=w = \Theta(\log n)\):

- \(n/w\) words, or \(n\) bits overhead.

- Compact, but not succinct

- \(O(1)\) queries

- Adjacent checkpoints \(R_k\) are similar!

Rank: A succinct version (Jacobson 1988) Link to heading

- Blocks of size \(s = w\).

- Super blocks of size \(s’ = w^2\).

- Store \(w\)-bit super block ranks \(R’_k := \rank(k\cdot s’)\).

- \(\lceil n/s’\rceil \cdot w = O(n/\log n) = o(n)\) bits

- Store \(\log_2 s’\)-bit block ranks relative to super block:

\[R_k = \rank(k\cdot s) - R’_{\lfloor k/w\rfloor} \leq s’.\]

- \(\lceil n/s\rceil \cdot \log_2(s’) = O(n/w \cdot \log_2 w) = o(n)\) bits

- Constant time queries: \[\rank(i) = R’_{\lfloor i/s’\rfloor} + R_{\lfloor{i/s\rfloor}} + \sum_{s\lfloor\frac is\rfloor \leq j < i} b_j\]

Rank: Emulating popcount Link to heading

- Originally, constant time \(w\)-bit popcount was not assumed.

Instead, we can take \(s = (\log_2 n)/2\), and pre-compute popcounts for all \(2^s\) masks:

- \(2^s = \sqrt{n}\) patterns

- \(s\) offsets per pattern

- \(\log_2 s\) bits per count

\[2^s \cdot s \cdot \log_2 s = O(\sqrt n \cdot \log_2 n \cdot \log_2\log_2 n) = o(n).\]

Engineering Rank: minimizing space usage Link to heading

In practice, we don’t need succinct. Compact with small constant overhead is enough. See QuadRank slides.

- \(s=512\): Store a 64-bit checkpoint every 512-bits of data:

- \(64/512 = 12.5\%\) space overhead;

- pop-counting 512 bits is sufficiently fast.

- Smaller, \(s’=2^{16}\): 16-bit checkpoints, and \(s’=2^{16}\)-bit super blocks storing 64-bit

checkpoints. (Kurpicz 2022)

- \(16/512 + 64/2^{16} = 3.2\%\) overhead.

- Faster count: checkpoints to the middle of each block: (Gottlieb and Reinert 2025)

- popcount only 256 bits.

- Fewer cache misses: store checkpoints inside each block: (Laws et al. 2024)

- 1 instead of 2 cache misses

- \(16/(512-16) + 64/2^{16} = 3.3\%\) overhead.

- Simple & fast classic: Rank 9 (Vigna 2008)

- 64-bit blocks with 9-bit checkpoints; 7 of them make a

u64.

- 64-bit blocks with 9-bit checkpoints; 7 of them make a

Select Link to heading

Assume we have \(k\) 1-bits.

- Compute all answers on-demand: \(O(n/\log n)\) queries.

- Store all answers: \(O(k)\) words.

- Sufficient if \(k = o(n / \log n)\).

The position of \(i\)’th 1-bit in a word can be found in constant time (in the “modern” word RAM model) using

| |

which uses pdep and tzcnt instructions (wikipedia, deposit_bits).

Succinct Select (Clark and Munro 1996) Link to heading

- Variable-sized super-blocks \(B_i\) containing \(b:=\log ^2 n\) 1-bits

\[\select(i) = \left(\sum_{0\leq j < \lfloor i/b\rfloor} |B_j|\right) + \select(B_{\lfloor

i/b\rfloor}, i - b\lfloor i/b\rfloor).\]

- Prefix sums take \(n/b \cdot w = O(n/\log n) = o(n)\) bits.

- Sparse blocks with \(|B| \geq \log^4 n\):

- Store answers directly in \(bw = \Theta(\log^3n)\) bits.

- \(\log^4\) bits in block, so \(o(n)\) overall.

- Dense blocks with \(|B| < \log^4 n\): next slide

Dense blocks with \(|B| < \log^4 n\):

Split again into variable-sized blocks of \(b’=\sqrt{\log n}\) ones.

- Prefix sums take \(\log \log^4 n\) bits per element, \(O(k/b’ \cdot \log \log^4 n) = o(n)\) total

- If sparse, size \(\geq \frac 12\log n\):

- Store \(b’\) \(\log_2(\log^4 n)\)-bit numbers, \(o(\log n)\) total

- If dense, size \(<\frac 12\log n\):

- Use

select_in_word - Clark’s select stores a lookup table like for popcount.

- Use

To answer \(\select(j)\):

- Find the super block \(\lfloor j/b\rfloor\).

- If sparse: read answer.

- If dense: find block \(\lfloor j/b’ \rfloor\).

- Read offset of superblock.

- Read offset of block inside superblock.

- If sparse block: read the position relative to the block.

- If dense block: popcount inside the block.

There exists a data structure with \(o(n)\) space overhead that allows \(O(1)\) rank and select queries.

Select in practice: Darray Link to heading

- Super blocks containing \(b = 2^{10}\) ones.

- Sparse if length \(\geq 2^{16}\)

- Use \(2^6=64\) bits to encode each position; \(64\cdot 2^{10} = 2^{16}\) bits

- Dense blocks (len \(<2^{16}\)):

- Store position of every \(2^5=32\)’nd 1, relative to start (10 bits each).

- Linear scan (\(<2^{16}\)) from there to find the exact answer.

- The last sparse/dense split from Clark’s select (previous slide) is skipped.

Further reading Link to heading

- QuadRank slides and paper (Groot Koerkamp 2026)

- Ben Langmead’s teaching material on bitvectors, rank, and select.

- With YouTube videos.

Next: Elias-Fano coding Link to heading

Bibliography Link to heading

References Link to heading

Possible exam questions Link to heading

- What does a rank query return? And select? How do they relate to each other?

- What is the main technique used to obtain a succinct rank data structure? And for select?

- Name a few ways in which practical rank methods differ from the Jacobson’s theoretical version.

- How does Darray differ from Clark’s select?

- Which modern CPU instructions can be used for rank and/or select? What older techniques do they replace?

- For both rank and select, why does a single layer of blocks not suffice to obtain \(o(n)\) space and \(O(1)\) query time?

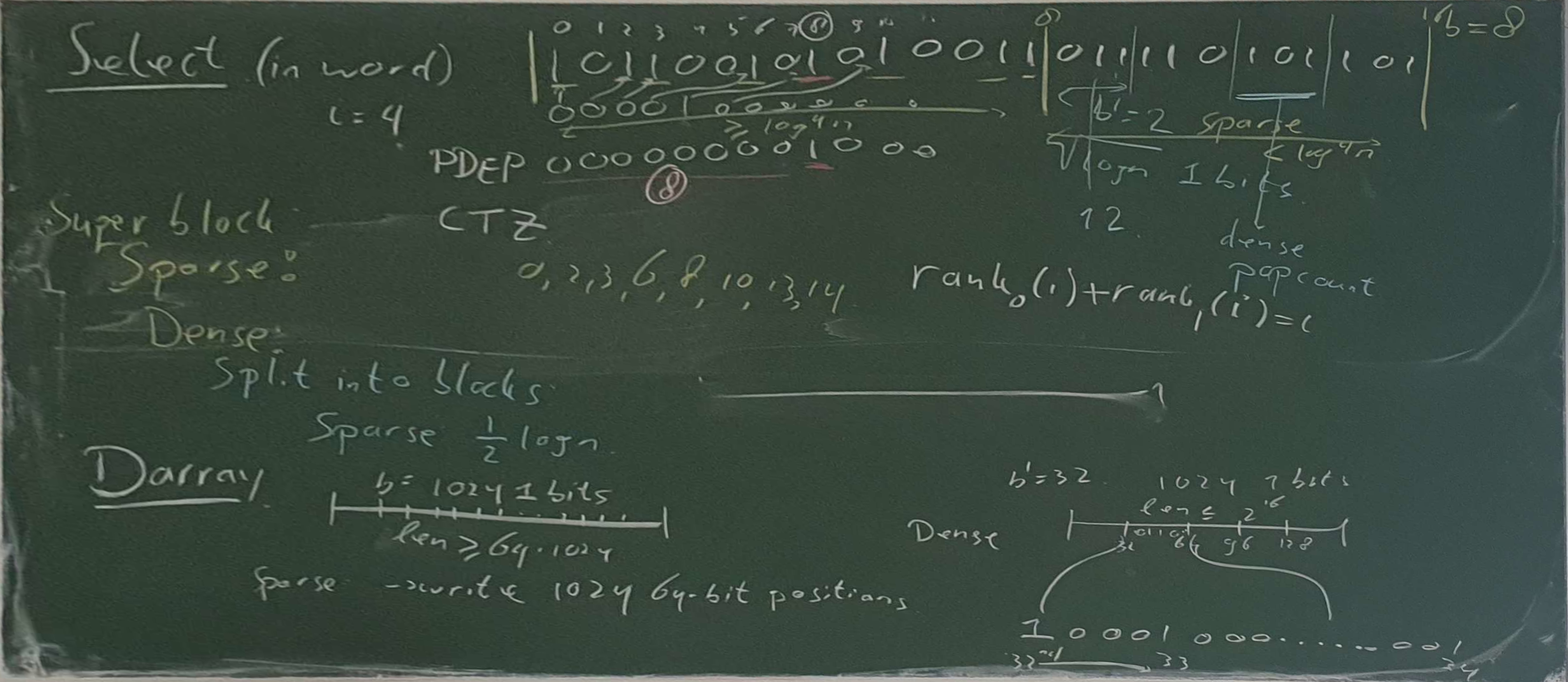

Blackboard: Bit vector & Rank Link to heading

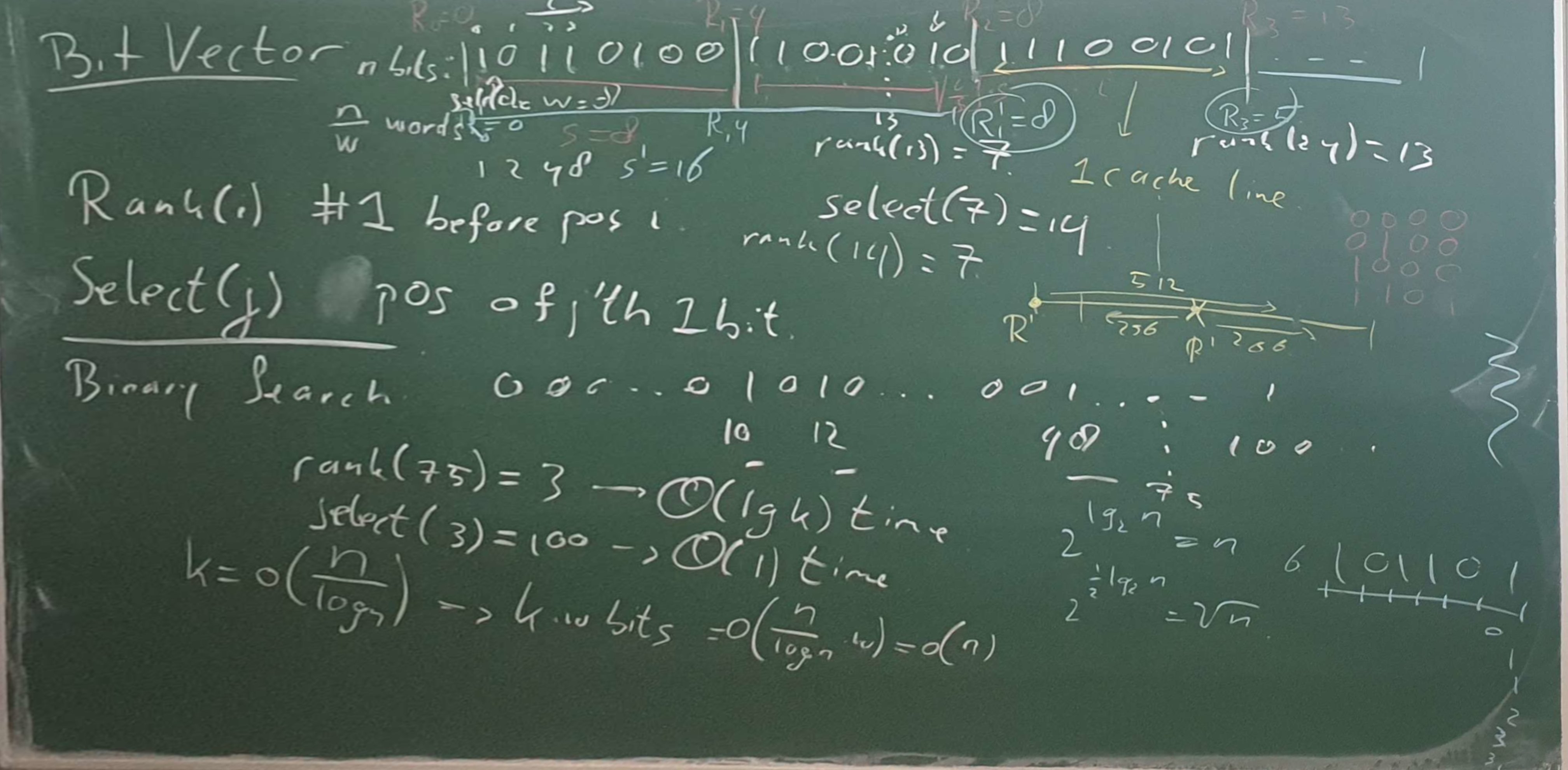

Blackboard: Select & Darray Link to heading